Benchmarking Java logging frameworks

Two years ago, Loggly ran a series of performance tests on some of the most popular Java logging frameworks at the time. These tests were designed to show how quickly each framework processed different kinds of log events, as well as the impact on the overall application. This is a follow-up to that test using the latest versions of each framework.

Note: This test uses a different benchmarking application than the previous test from 2015. You should treat these results independently of our last results. You can find a full breakdown of our testing methodology, including source code, below.

The contenders

For this test, we investigated three of the most commonly used Java logging frameworks:

- Log4j 2.8.2

- Logback 1.2.3 using SLF4J 1.7.25

- JUL (

java.util.logging)

We removed Log4j 1 from this test after it reached its end of life in August of 2015.

We tested each framework using file and socket appenders. For syslog appenders, we sent log data to a local rsyslog server over both TCP and UDP. We also tested asynchronous logging using each framework’s respective AsyncAppender. Additionally, we tested Log4j 2’s asynchronous loggers for both file and syslog appenders.

Setup and configuration

Our goal was to measure the amount of time needed to log a number of events. We chose to keep each framework as close to its default configuration as possible, and any configuration changes we made were reflected across all three frameworks (if possible). You might experience a boost in performance or reliability by tweaking your framework to suite your application.

The test project

Our application logged a total of 1,000,000 INFO events over 10 iterations (we actually performed 11 iterations, but the first was discarded due to large startup times to warm the JIT). To simulate a workload, we started multiple background threads that simply generated prime numbers. We repeated this test three times and averaged the results. While this drove the logging frameworks harder than in a typical workload, we wanted to push them to their limit. In a typical workload, you may not see as many dropped events, because events will be more spread out over time, allowing the system to catch up.

You can find the full source of the benchmarking project on GitHub.

Hardware and software

| Hardware | Intel Core i7-4500U (2 cores, 4 threads), 16GB RAM, SSD |

| OS | 64-bit Ubuntu 16.04, LXDE desktop |

| JDK version | OpenJDK 1.8.0_131 |

Frameworks

The configuration files for each framework can be found in the src/main/resources directory in the test project folder. The application loads the appropriate file on startup depending on the parameters passed to the JVM.

Appender configuration

We configured our file appenders to append entries to a single file using a PatternLayout of %d{HH:mm:ss.SSS} %-5level - %msg%n. Our syslog appenders sent log data to a local rsyslog server, which recorded the events in /var/log/syslog.

By default, asynchronous appenders have a queue size of 128 events for Log4j 2 and 256 events for Logback. We increased this limit to 1,000,000 for both frameworks. Additionally, Logback’s AsyncAppender is non-blocking by default, meaning it will likely drop messages for the sake of performance. We configured Logback to not discard messages by setting discardingThreshold=0.

For Log4j 2’s asynchronous loggers, we performed separate tests with location info enabled and disabled. Including location info (such as class, method, and line) can cause asynchronous loggers to run as much as 30 – 100 times slower, and we wanted to emphasize this difference.

Test results

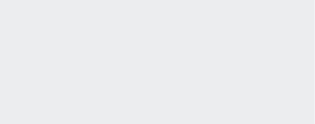

File appender

As in our first test, Logback performed faster in both synchronous and asynchronous logging when compared to Log4j 2 and JUL. With synchronous appenders, the difference between Logback and Log4j 2 is almost imperceptible. However, the difference between Logback and JUL is an incredible 11.68 seconds!

With asynchronous appenders, we saw an expected decrease in runtime for both Log4j 2 and Logback. Log4j 2 saw a 35% drop in runtime over synchronous logging while Logback saw a 46% drop. However, this boost comes with a cost, and Logback dropped 36% of its messages in the process.

| Framework | Time (ms) | Drop rate | |

|---|---|---|---|

| Synchronous | Log4j 2 | 3234 | 0% |

| Logback | 3150 | 0% | |

| JUL | 14827 | 0% | |

| Asynchronous | Log4j 2 | 2103 | 0% |

| Logback | 1695 | 36% |

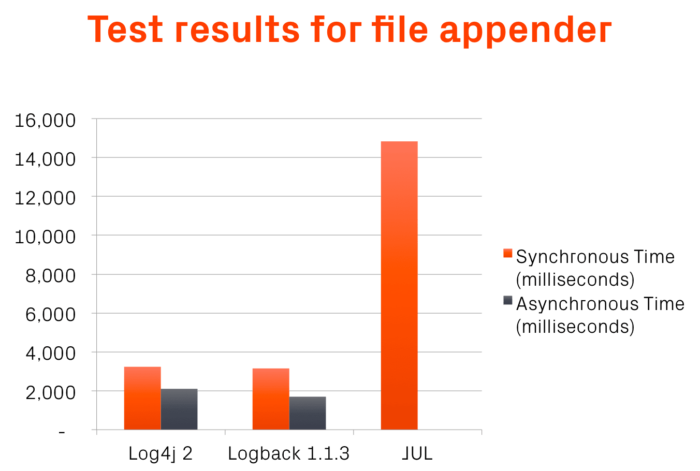

Syslog appender

Interestingly, our latest syslog tests saw significantly reduced drop rates from our previous tests for both Log4j 2 and Logback, and when using TCP in Log4j 2, there were no dropped messages at all. We also found that using TCP actually resulted in a performance boost of almost 40% for synchronous logging, making it the preferred choice when using Log4j 2.

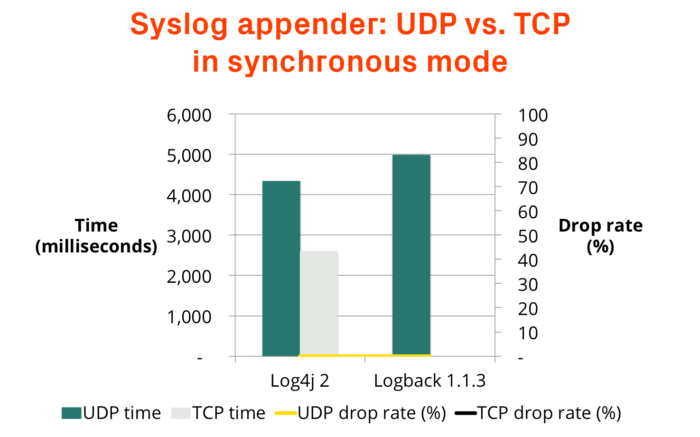

With UDP, using both frameworks asynchronously resulted in a faster runtime and a higher drop rate. Log4j 2 finished in just over three seconds, but dropped 3% of its messages in the process. Using TCP improved things somewhat, although it still fell behind the synchronous TCP appender. Logback, on the other hand, saw an impressive 54% improvement in runtime, but also a 61% drop rate.

| Framework | Time (ms)—UDP | Drop rate—UDP | Time (ms)—TCP | Drop rate—TCP | |

|---|---|---|---|---|---|

| Synchronous | Log4j 2 | 4335 | < 0.1% | 2605 | 0% |

| Logback | 4979 | < 0.1% | - | - | |

| Asynchronous | Log4j 2 | 3086 | 3% | 3185 | 0% |

| Logback | 2312 | 61% | - | - |

Asynchronous loggers

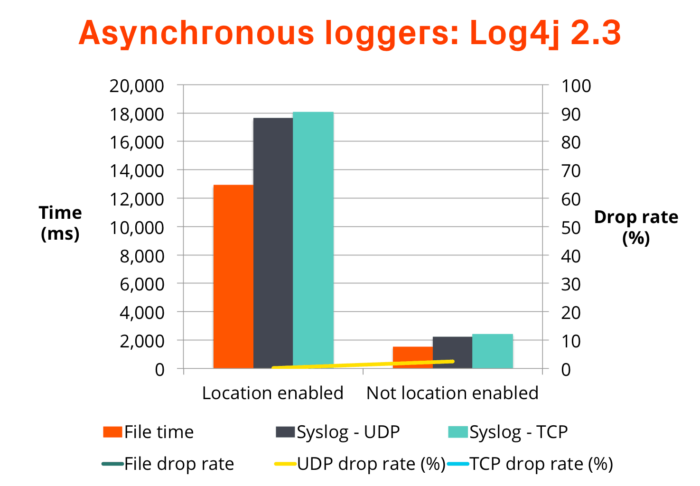

Log4j 2.3 introduced the concept of asynchronous loggers, which are designed to provide higher log throughput, lower latency caused by logging calls, and faster overall application performance. The key difference between asynchronous loggers and asynchronous appenders is the use of the LMAX Disruptor library instead of a queue. This has the effect of returning control from the logging framework back to the application faster than using an asynchronous appender.

By default, asynchronous loggers have a buffer with a capacity of 256K events. Events exceeding that buffer are sent directly to the underlying appenders. We increased the buffer size to 1M messages to prevent this from happening.

We also found that asynchronous loggers perform significantly better when location information (class name, calling method, etc.) is excluded from log events. The performance impact of retrieving location data for an asynchronous logger can be 30 – 100 times slower. We tested with and without location information enabled in order to better highlight the difference.

| File | Syslog - UDP | Syslog - TCP | ||||

|---|---|---|---|---|---|---|

| Location enabled? | Time (ms) | Drop rate | Time (ms) | Drop rate | Time (ms) | Drop rate |

| Yes | 12924 | 0% | 17645 | <0.1% | 18058 | 0% |

| No | 1534 | 0% | 2244 | 2.5% | 2407 | 0% |

Keep in mind that the throughput of asynchronous loggers increases with the number of threads. The Log4j 2 team’s own internal testing shows an exponential increase from a single thread up to 64 threads, whereas both synchronous and asynchronous appenders remain relatively constant.

Recommendation

The combination that provided the best performance and reliability is Log4j 2’s FileAppender using an asynchronous logger. It finished in half the time of Log4j 2’s synchronous logger without dropping any events. You can see the configuration for this setup below.

<?xml version="1.0" encoding="UTF-8"?>

<Configuration>

<Appenders>

<File name="File" bufferedIO="true" fileName="logs/log4j2-file-sync-${date:HH:mm:ss.SSS}.log">

<PatternLayout pattern="%d %p %m%n" />

</File>

</Appenders>

<Loggers>

<Root level="all" includeLocation="false">

<AppenderRef ref="File" />

</Root>

</Loggers>

</Configuration>

In second place was Log4j 2’s FileAppender using an AsyncAppender, and finally Logback’s FileAppender. However, we cannot recommend Logback’s FileAppender using an AsyncAppender due to the large number of dropped events.

Compared to the previous test, all frameworks saw an increase in runtime. This is likely due to an increase in the complexity of the test application. If you would like to try the test yourself, the source code is publicly available on GitHub. You can also find the configuration files for each test by clicking here.

Additional links

Configuring Logback using syslog for Loggly

Benchmarking Java Logging Frameworks (2015 post)

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

Andre Newman