New and improved Amazon S3 ingestion

Amazon Web Services customers use Amazon S3 for many purposes, from hosting applications, media, and software to backup and storage, often with the goal of minimizing their online storage costs. AWS services such as Amazon CloudFront, Elastic Load Balancing, and AWS CloudTrail can also deliver their logs to Amazon S3. For Loggly, Amazon S3 serves both as an archiving repository and as a data source for importing mission-critical log data that enriches our customers' analyses.

What happens in S3 shouldn’t necessarily stay in S3. That’s why we have made it easier than ever to send these logs to Loggly.

How to send logs from Amazon S3 to Loggly

Previously, we offered an AWS Lambda script that sent logs from Amazon S3 to Loggly. However, customers had to set up and run the script themselves, at their own expense. Furthermore, AWS Lambda is not available in all AWS regions.

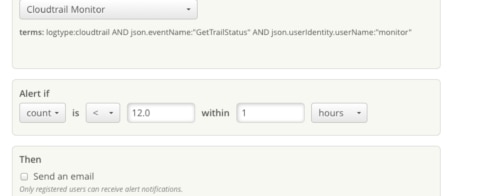

Now, Loggly automatically downloads new objects as they are created in your S3 bucket. The Amazon S3 event notification feature sends a notification to Amazon Simple Queue Service (SQS) whenever an object is created in the bucket. Amazon SQS is a fully managed message queuing service for reliably communicating among distributed software components and microservices at scale. You can set up an SQS queue in a few steps from the AWS Management Console, and all AWS customers can make 1 million Amazon SQS requests per month free of charge.
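For illustration, the link between a bucket and a queue can be expressed as an S3 bucket notification configuration. The minimal Python sketch below builds one in the shape accepted by the S3 PutBucketNotificationConfiguration API (boto3's `put_bucket_notification_configuration`), routing every `s3:ObjectCreated:*` event, which covers PUT, POST, COPY, and completed multipart uploads, to a single queue. The queue ARN and the rule `Id` are hypothetical placeholders; this is not taken from Loggly's setup script.

```python
import json


def build_notification_config(queue_arn: str) -> dict:
    """Return an S3 notification configuration that sends every
    object-created event in the bucket to one SQS queue."""
    return {
        "QueueConfigurations": [
            {
                "Id": "send-new-objects-to-loggly",  # hypothetical rule name
                "QueueArn": queue_arn,
                # Matches PUT, POST, COPY, and CompleteMultipartUpload.
                "Events": ["s3:ObjectCreated:*"],
            }
        ]
    }


if __name__ == "__main__":
    # Hypothetical queue ARN; substitute your own region, account ID, and queue name.
    arn = "arn:aws:sqs:us-east-1:123456789012:loggly-s3-queue"
    print(json.dumps(build_notification_config(arn), indent=2))
```

Applying it would look roughly like `boto3.client("s3").put_bucket_notification_configuration(Bucket=..., NotificationConfiguration=config)`; the sketch stops short of that call so it runs without AWS credentials. S3 validates the destination when the configuration is applied, so the queue must already allow S3 to send messages to it.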

Amazon S3 APIs such as PUT, POST, and COPY can create an object in your S3 bucket. The S3 notification contains information about the key of the object and the S3 bucket in which it resides. The Loggly S3 ingestion tool will read those notifications from your SQS queue, read the key and bucket information of an object in that notification, download that object from S3, and ingest it into Loggly. It offers:

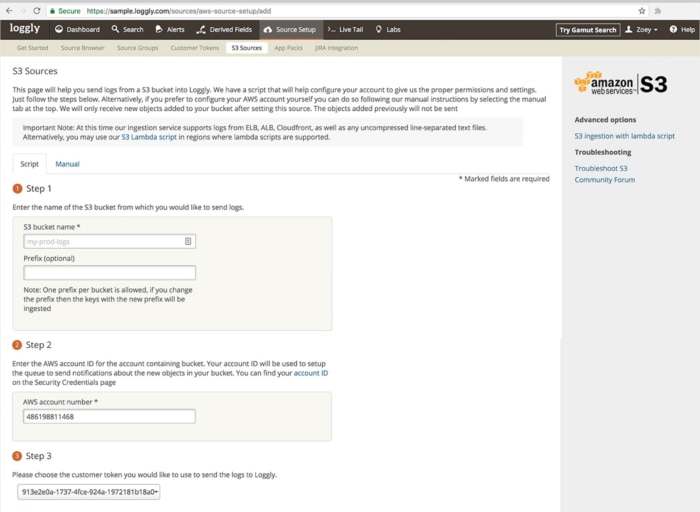

- Support for logs from Application Load Balancer (ALB), Elastic Load Balancing (ELB), and Amazon CloudFront, as well as any uncompressed, newline-delimited text file

- Support for an expanded set of AWS regions, including regions in the US and EU
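The read, extract, download, and ingest flow described above can be sketched in Python. This minimal sketch covers only the two steps that need no AWS credentials: pulling `(bucket, key)` pairs out of the body of an SQS message carrying an S3 event notification, and splitting a downloaded object into one event per line. It assumes the notification is delivered to SQS directly (not wrapped in SNS) and that each line becomes one log event; the function names are hypothetical, and the code does not describe how Loggly's tool is implemented. Because ALB and CloudFront deliver their log files gzip-compressed, the splitter decompresses any body that starts with the gzip magic bytes.

```python
import gzip
import json
from typing import List, Tuple
from urllib.parse import unquote_plus

GZIP_MAGIC = b"\x1f\x8b"


def objects_from_notification(message_body: str) -> List[Tuple[str, str]]:
    """Return (bucket, key) pairs for the objects created in one S3 event message."""
    event = json.loads(message_body)
    pairs = []
    # When a notification is first configured, S3 sends an "s3:TestEvent"
    # message that has no "Records" list; it yields no objects here.
    for record in event.get("Records", []):
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue  # ignore anything other than object-created events
        bucket = record["s3"]["bucket"]["name"]
        # Keys in S3 notifications are URL-encoded (a space arrives as "+").
        key = unquote_plus(record["s3"]["object"]["key"])
        pairs.append((bucket, key))
    return pairs


def split_log_lines(body: bytes) -> List[str]:
    """Split an object's contents into non-empty lines, one event per line."""
    if body[:2] == GZIP_MAGIC:
        body = gzip.decompress(body)  # e.g. ALB or CloudFront log files
    text = body.decode("utf-8", errors="replace")
    return [line for line in text.splitlines() if line.strip()]


if __name__ == "__main__":
    sample = json.dumps({
        "Records": [{
            "eventName": "ObjectCreated:Put",
            "s3": {
                "bucket": {"name": "my-log-bucket"},
                "object": {"key": "logs/app+server.log"},
            },
        }]
    })
    print(objects_from_notification(sample))
    # [('my-log-bucket', 'logs/app server.log')]
    print(split_log_lines(gzip.compress(b"first line\n\nsecond line\n")))
    # ['first line', 'second line']
```

In a complete consumer, the message bodies would typically come from boto3's `sqs.receive_message` and the object bytes from `s3.get_object(...)["Body"].read()`, with each message deleted from the queue only after its objects have been ingested.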

We provide a script that automatically configures your account to send logs from your Amazon S3 buckets to Loggly. It creates the Amazon SQS queue in your account, links it to your S3 bucket, and grants Loggly permission to read from that SQS queue and S3 bucket. Alternatively, you can configure your AWS account by following the manual setup instructions.
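One piece of that setup is letting S3 publish to the queue at all: S3 can deliver notifications to an SQS queue only if the queue's access policy allows it. The minimal Python sketch below builds such a policy, scoped to a single bucket. The queue ARN and bucket name are hypothetical placeholders, and the permissions Loggly itself needs to read the queue and bucket are not covered here.

```python
import json


def queue_policy_for_bucket(queue_arn: str, bucket_name: str) -> str:
    """Return an SQS access policy (as JSON) that lets one S3 bucket send messages."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowS3BucketNotifications",
                "Effect": "Allow",
                "Principal": {"Service": "s3.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": queue_arn,
                # Only accept messages that originate from this bucket.
                "Condition": {
                    "ArnLike": {"aws:SourceArn": f"arn:aws:s3:::{bucket_name}"}
                },
            }
        ],
    }
    return json.dumps(policy, indent=2)


if __name__ == "__main__":
    # Hypothetical queue ARN and bucket name.
    print(queue_policy_for_bucket(
        "arn:aws:sqs:us-east-1:123456789012:loggly-s3-queue",
        "my-log-bucket",
    ))
```

The resulting string is what the SQS SetQueueAttributes API expects in its `Policy` attribute, for example via boto3's `sqs.set_queue_attributes(QueueUrl=..., Attributes={"Policy": policy})`.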

Once the data is ingested by Loggly, you can analyze it with the full set of analysis capabilities available in our log management service.

Solve more operational problems now

Amazon S3 log ingestion is available to all Loggly customers. So check out Loggly anytime, and know that your logs will never be stuck at the Hotel California.

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

Pranay Kamat is Senior Product Manager at Loggly. His previous experience includes designing user interfaces, APIs, and data migration tools for Oracle and Accela. He has an MBA from The University of Texas at Austin and a Master's degree in Computer Science from Cornell University.